* reorganize ssz dependencies

This PR continues the work in https://github.com/status-im/nimbus-eth2/pull/2646, https://github.com/status-im/nimbus-eth2/pull/2779 as well as past issues with serialization and type, to disentangle SSZ from eth2 and at the same time simplify imports and exports with a structured approach.

The principal idea here is that when a library wants to introduce SSZ support, they do so via 3 files:

* `ssz_codecs` which imports and reexports `codecs` - this covers the basic byte conversions and ensures no overloads get lost
* `xxx_merkleization` imports and exports `merkleization` to specialize and get access to `hash_tree_root` and friends
* `xxx_ssz_serialization` imports and exports `ssz_serialization` to specialize ssz for a specific library

Those that need to interact with SSZ always import the `xxx_` versions of the modules and never `ssz` itself so as to keep imports simple and safe.

This is similar to how the REST / JSON-RPC serializers are structured in that someone wanting to serialize spec types to REST-JSON will import `eth2_rest_serialization` and nothing else.

* split up ssz into a core library that is independent of eth2 types
* rename `bytes_reader` to `codec` to highlight that it contains coding and decoding of bytes and native ssz types
* remove tricky List init overload that causes compile issues
* get rid of top-level ssz import
* reenable merkleization tests
* move some "standard" json serializers to spec
* remove `ValidatorIndex` serialization for now
* remove test_ssz_merkleization
* add tests for over/underlong byte sequences
* fix broken seq[byte] test - seq[byte] is not an SSZ type

There are a few things this PR doesn't solve:

* like #2646 this PR is weak on how to handle root and other dontSerialize fields that "sometimes" should be computed - the same problem appears in REST / JSON-RPC etc

* Fix a build problem on macOS

* Another way to fix the macOS builds

Co-authored-by: Zahary Karadjov <zahary@gmail.com>

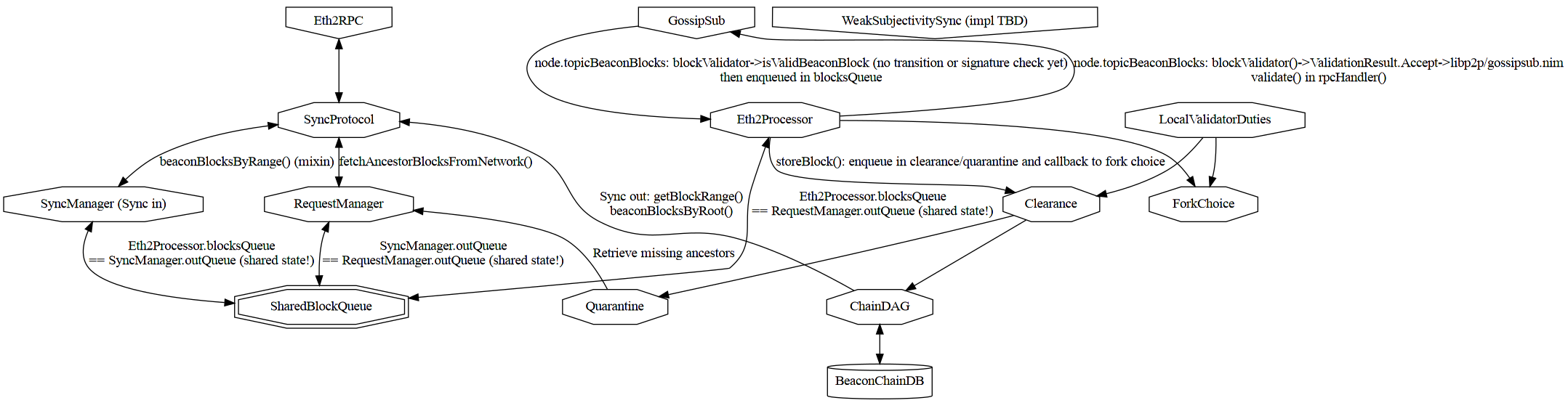

Block syncing

This folder holds all modules related to block syncing.

Block syncing uses the Eth2 RPC protocol.

Reference diagram

Eth2 RPC in

Blocks are requested during sync by the SyncManager.

Blocks are received by batch:

- `syncStep(SyncManager, index, peer)`
- in case of success:
  - `push(SyncQueue, SyncRequest, seq[SignedBeaconBlock])` is called to handle a successful sync step. It calls `validate(SyncQueue, SignedBeaconBlock)` on each retrieved block, one by one.
  - `validate` only enqueues the block in the SharedBlockQueue `AsyncQueue[BlockEntry]` and does no extra validation; extra validation happens only in the GossipSub case.
- in case of failure:
  - `push(SyncQueue, SyncRequest)` is called to reschedule the sync request.
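The success and failure paths of a sync step can be pictured with a small model. This is a minimal sketch in Python, not the Nim implementation: `SyncQueueSketch`, its `pending` deque, and the use of plain strings as blocks are all assumptions made for illustration. Only the names `push` and `validate` come from the description above.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class SyncRequest:
    # Hypothetical request shape: a slot range to download.
    start_slot: int
    count: int


@dataclass
class SyncQueueSketch:
    # Stand-in for the shared AsyncQueue[BlockEntry].
    shared_block_queue: deque = field(default_factory=deque)
    # Hypothetical holding area for requests that must be retried.
    pending: deque = field(default_factory=deque)

    def validate(self, block: str) -> None:
        # Despite its name, this only enqueues the block; no checks run here.
        self.shared_block_queue.append(block)

    def push(self, request: SyncRequest, blocks: Optional[List[str]] = None) -> None:
        if blocks is None:
            # Failure path: reschedule the request.
            self.pending.append(request)
            return
        # Success path: hand over each block, one by one.
        for block in blocks:
            self.validate(block)
```

With this model, `push(request, blocks)` fills `shared_block_queue` in order, while `push(request)` alone leaves the block queue untouched and puts the request back into `pending`.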

Every second when sync is not in progress, the beacon node asks the RequestManager to download all missing blocks currently in quarantine:

- via `handleMissingBlocks`
- which calls `fetchAncestorBlocks`
- which asynchronously enqueues the request in the SharedBlockQueue `AsyncQueue[BlockEntry]`.

The RequestManager runs an event loop:

- that calls `fetchAncestorBlocksFromNetwork`
- which RPC-calls peers with `beaconBlocksByRoot`
- and calls `validate(RequestManager, SignedBeaconBlock)` on each retrieved block, one by one
- `validate` only enqueues the block in the `AsyncQueue[BlockEntry]` and does no extra validation; extra validation happens only in the GossipSub case
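The shape of this event loop can be sketched with Python's `asyncio`. This is an illustrative model under stated assumptions, not the Nim code: the `requests` inbox, the `None` stop sentinel, the representation of a peer as a set of known roots, and the fake `beacon_blocks_by_root` are all hypothetical stand-ins.

```python
import asyncio


async def beacon_blocks_by_root(peer, roots):
    # Hypothetical stand-in for the RPC call: the "peer" is a set of roots
    # it knows about, and it returns a fake block for each known root.
    return [f"block-{root}" for root in roots if root in peer]


async def fetch_ancestor_blocks_from_network(peer, roots, block_queue):
    blocks = await beacon_blocks_by_root(peer, roots)
    for block in blocks:
        # Mirrors validate(RequestManager, SignedBeaconBlock): enqueue only.
        await block_queue.put(block)


async def request_manager_loop(requests, peer, block_queue):
    while True:
        roots = await requests.get()
        if roots is None:  # sentinel used only to stop this sketch
            break
        await fetch_ancestor_blocks_from_network(peer, roots, block_queue)


async def demo():
    requests = asyncio.Queue()
    block_queue = asyncio.Queue()  # stand-in for AsyncQueue[BlockEntry]
    peer = {"a", "b"}
    await requests.put(["a", "c", "b"])
    await requests.put(None)
    await request_manager_loop(requests, peer, block_queue)
    received = []
    while not block_queue.empty():
        received.append(block_queue.get_nowait())
    return received
```

Running `asyncio.run(demo())` drains one request and returns the blocks the fake peer could serve, in request order.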

Weak subjectivity sync

Not implemented!

Comments

The `validate` procedure name for `SyncManager` and `RequestManager` is misleading, as no P2P validation actually occurs.

Sync vs Steady State

During sync:

- The RequestManager is deactivated

- The SyncManager is working full speed ahead

- Gossip is deactivated

Bottlenecks during sync

During sync:

- The bottleneck is clearing the SharedBlockQueue `AsyncQueue[BlockEntry]` via `storeBlock`, which requires full verification (state transition + cryptography)

Backpressure

The SyncManager handles backpressure by ensuring that `current_queue_slot <= request.slot <= current_queue_slot + sq.queueSize * sq.chunkSize`.

- `queueSize` is -1 (unbounded) by default according to a code comment, but all init paths appear to use 1 (?)
- `chunkSize` is `SLOTS_PER_EPOCH` = 32
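As a worked example of the window condition, here is a minimal Python sketch. The function name `in_window` is hypothetical, and treating a negative `queue_size` as "no upper bound" is an assumption based on the comment mentioned above, not confirmed behavior.

```python
SLOTS_PER_EPOCH = 32


def in_window(request_slot, current_queue_slot, queue_size, chunk_size=SLOTS_PER_EPOCH):
    """Return True if a request for request_slot fits the backpressure window."""
    if queue_size < 0:
        # Assumption: -1 means unbounded, so only the lower bound applies.
        return request_slot >= current_queue_slot
    upper = current_queue_slot + queue_size * chunk_size
    return current_queue_slot <= request_slot <= upper
```

With `queue_size = 1` and the queue at slot 100, the accepted range is slots 100 through 132 inclusive; a request for slot 133 has to wait until the queue advances.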

However the shared AsyncQueue[BlockEntry] itself is unbounded.

Concretely:

- The shared `AsyncQueue[BlockEntry]` is bounded for sync
- The shared `AsyncQueue[BlockEntry]` is unbounded for validated gossip blocks
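One way to read the two statements together: the queue itself has no capacity limit, but the sync producer limits itself to the slot window while the gossip producer does not. The Python sketch below models that reading; `sync_enqueue` and `gossip_enqueue` are hypothetical helpers, slots stand in for blocks, and the defaults `queue_size=1` and `chunk_size=32` are taken from the values quoted in this section.

```python
from collections import deque


def sync_enqueue(queue, slots, current_queue_slot, queue_size=1, chunk_size=32):
    # The sync producer only enqueues slots inside the backpressure window.
    upper = current_queue_slot + queue_size * chunk_size
    accepted = [s for s in slots if current_queue_slot <= s <= upper]
    queue.extend(accepted)
    return len(accepted)


def gossip_enqueue(queue, slots):
    # The gossip producer applies no window, so the queue can grow freely.
    slots = list(slots)
    queue.extend(slots)
    return len(slots)
```

In this model, offering 100 consecutive slots through `sync_enqueue` admits only those inside the window, whereas `gossip_enqueue` admits all of them.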

The RequestManager and Gossip are deactivated during sync and so do not contribute to pressure.