Running light client (LC) sync and DAG sync at the same time is normally fine. On a tiny devnet where some peers may not support LC data, however, a peer can end up disconnected while the DAG is in sync: on a local devnet with perfect network conditions, DAG sync never uses any req/resp, so over the course of minutes the stuck LC sync removes the peer. There is no need to actively sync the LC from the network when the DAG is already synced, which prevents this specific low-peer devnet issue (others remain). The LC is still updated locally when the DAG finalized checkpoint advances.


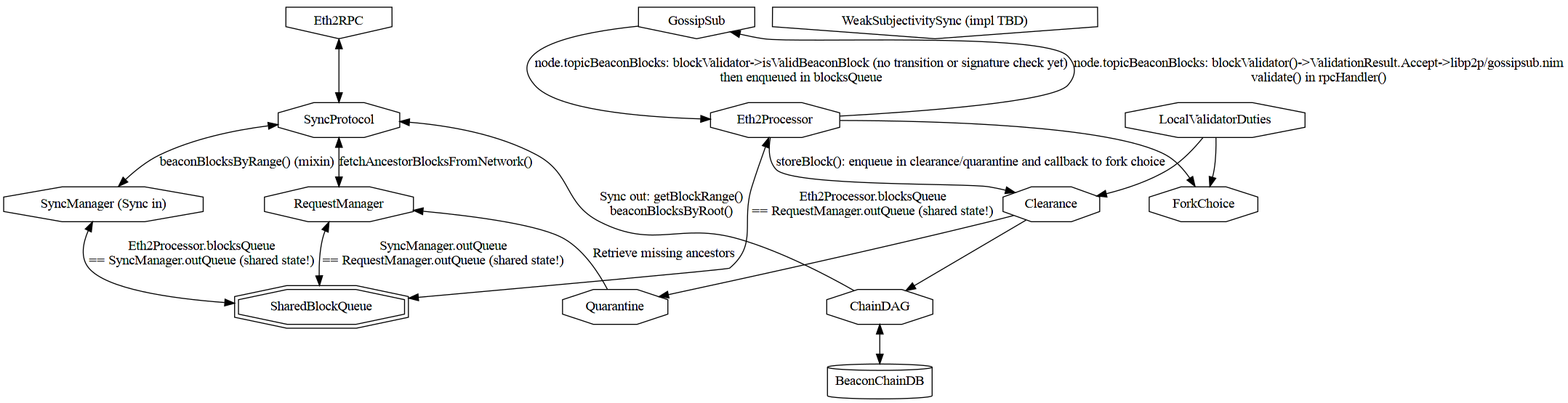

Block syncing

This folder holds all modules related to block syncing

Block syncing uses ETH2 RPC protocol.

Reference diagram

Eth2 RPC in

Blocks are requested during sync by the SyncManager.

Blocks are received by batch via `syncStep(SyncManager, index, peer)`:

- in case of success:
  - `push(SyncQueue, SyncRequest, seq[SignedBeaconBlock])` is called to handle a successful sync step. It calls `validate(SyncQueue, SignedBeaconBlock)` on each block retrieved, one by one.
  - `validate` only enqueues the block in the SharedBlockQueue `AsyncQueue[BlockEntry]` and does no extra validation; extra validation happens only in the GossipSub case.
- in case of failure:
  - `push(SyncQueue, SyncRequest)` is called to reschedule the sync request.
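The success and failure paths above can be modelled with a small sketch. It is written in Python rather than Nim purely for illustration: `SyncQueueModel`, the `SyncRequest` fields, and the string "blocks" are hypothetical stand-ins, not the Nimbus types, and the real `push` overloads may do considerably more.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, List, Optional


@dataclass(frozen=True)
class SyncRequest:
    """Hypothetical stand-in for a batch request: `count` slots from `slot`."""
    slot: int
    count: int


@dataclass
class SyncQueueModel:
    """Toy model of the two `push` paths described above."""
    # Models the shared AsyncQueue[BlockEntry].
    shared_block_queue: Deque[str] = field(default_factory=deque)
    # Requests waiting to be retried (an assumption of this sketch).
    pending: Deque[SyncRequest] = field(default_factory=deque)

    def validate(self, block: str) -> None:
        # Only enqueues the block; no verification happens here.
        self.shared_block_queue.append(block)

    def push(self, request: SyncRequest,
             blocks: Optional[List[str]] = None) -> None:
        if blocks is None:
            # Failure path: reschedule the same request.
            self.pending.append(request)
            return
        # Success path: hand over each block one by one.
        for block in blocks:
            self.validate(block)


queue = SyncQueueModel()
queue.push(SyncRequest(slot=0, count=2), ["block-0", "block-1"])  # success
queue.push(SyncRequest(slot=2, count=2))                          # failure
```

After these two calls, the shared queue holds the two blocks from the successful step and the failed request is waiting to be retried.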

Every second when sync is not in progress, the beacon node will ask the RequestManager to download all missing blocks currently in quarantine.

- via `handleMissingBlocks`
- which calls `fetchAncestorBlocks`
- which asynchronously enqueues the request in the SharedBlockQueue `AsyncQueue[BlockEntry]`.

The RequestManager runs an event loop:

- that calls `fetchAncestorBlocksFromNetwork`
- which RPC calls peers with `beaconBlocksByRoot`
- and calls `validate(RequestManager, SignedBeaconBlock)` on each block retrieved, one by one
- `validate` only enqueues the block in the `AsyncQueue[BlockEntry]` and does no extra validation; extra validation happens only in the GossipSub case
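The event loop above can be sketched as follows. This is an illustrative Python model using `asyncio`, not the Nim implementation: `PEER_BLOCKS`, `beacon_blocks_by_root`, the `None` stop sentinel, and the string roots and blocks are all assumptions made for the example.

```python
import asyncio
from typing import Dict, List

# Hypothetical peer store (block root -> block); stands in for the network.
PEER_BLOCKS: Dict[str, str] = {"root-a": "block-a", "root-b": "block-b"}


async def beacon_blocks_by_root(roots: List[str]) -> List[str]:
    """Stand-in for the RPC call; returns only the blocks the peer has."""
    await asyncio.sleep(0)  # yield to the event loop, as a network call would
    return [PEER_BLOCKS[r] for r in roots if r in PEER_BLOCKS]


async def request_manager_loop(requests: asyncio.Queue,
                               shared_block_queue: asyncio.Queue) -> None:
    """Take requested roots, fetch them, and enqueue each block unverified."""
    while True:
        roots = await requests.get()
        if roots is None:  # sentinel used by this sketch to stop the loop
            break
        for block in await beacon_blocks_by_root(roots):
            # Counterpart of `validate`: enqueue only, no verification.
            await shared_block_queue.put(block)


async def demo() -> List[str]:
    requests: asyncio.Queue = asyncio.Queue()
    shared: asyncio.Queue = asyncio.Queue()
    await requests.put(["root-a", "root-missing", "root-b"])
    await requests.put(None)
    await request_manager_loop(requests, shared)
    return [shared.get_nowait() for _ in range(shared.qsize())]


fetched = asyncio.run(demo())
```

Blocks the peer cannot supply are simply absent from the response, so only the two known blocks reach the shared queue in this run.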

Weak subjectivity sync

Not implemented!

Comments

The `validate` procedure name for SyncManager and RequestManager is misleading, as no P2P validation actually occurs.

Sync vs Steady State

During sync:

- The RequestManager is deactivated

- The SyncManager is working at full speed

- Gossip is deactivated

Bottlenecks during sync

During sync:

- The bottleneck is clearing the SharedBlockQueue `AsyncQueue[BlockEntry]` via `storeBlock`, which requires full verification (state transition + cryptography)

Backpressure

The SyncManager handles backpressure by ensuring that `current_queue_slot <= request.slot <= current_queue_slot + sq.queueSize * sq.chunkSize`.

- `queueSize` is -1 (unbounded) by default according to a comment, but all init paths use 1 (?)

- chunkSize is SLOTS_PER_EPOCH = 32
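The window check can be written out as a short worked example. This is a Python sketch of the inequality as stated above; `within_window` and its parameter names are hypothetical, and the slot values are made up for illustration.

```python
SLOTS_PER_EPOCH = 32  # chunkSize, per the text above


def within_window(request_slot: int, current_queue_slot: int,
                  queue_size: int, chunk_size: int = SLOTS_PER_EPOCH) -> bool:
    """Return True when the request slot satisfies the stated inequality."""
    upper = current_queue_slot + queue_size * chunk_size
    return current_queue_slot <= request_slot <= upper


# With queue_size = 1 the window spans one chunk of 32 slots: [100, 132].
in_window = within_window(request_slot=132, current_queue_slot=100, queue_size=1)
too_far = within_window(request_slot=133, current_queue_slot=100, queue_size=1)
```

Here slot 132 sits exactly on the upper bound and is accepted, while slot 133 falls outside the window.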

However the shared AsyncQueue[BlockEntry] itself is unbounded.

Concretely:

- The shared `AsyncQueue[BlockEntry]` is bounded for sync
- The shared `AsyncQueue[BlockEntry]` is unbounded for validated gossip blocks

RequestManager and Gossip are deactivated during sync and so do not contribute to pressure.